Understanding Kubernetes and Its Advantages

In the ever-evolving world of software development and IT operations, Kubernetes has emerged as a pivotal technology for managing containerized applications at scale. Whether you're a developer, a system administrator, or an IT manager, understanding Kubernetes can provide significant advantages for your projects and operations.

What is Kubernetes?

Kubernetes, often abbreviated as K8s, is an open-source platform designed to automate the deployment, scaling, and operation of application containers across clusters of hosts. Originally developed by Google and now maintained by the Cloud Native Computing Foundation (CNCF), Kubernetes has become the de facto standard for container orchestration.

At its core, Kubernetes aims to simplify the complex tasks associated with container management. It provides a robust framework to run distributed systems resiliently, with automated scaling, failover, and load balancing.

Key Components of Kubernetes

To understand Kubernetes better, it’s essential to familiarize yourself with its key components:

- Nodes: The physical or virtual machines that make up the cluster. Nodes fall into two roles:

  - Control plane node (historically called the master node): Manages the Kubernetes cluster, coordinating activities like scheduling, scaling, and upgrading.

  - Worker node: Runs the containers and workloads.

- Pods: The smallest deployable units in Kubernetes, consisting of one or more containers that share storage, network, and a specification for how to run the containers.

- Services: Define a logical set of pods and a policy to access them. A Service gives those pods a stable network endpoint that other parts of your application, and external traffic, can use to reach them.

- Volumes: Provide a way for containers in a pod to share data and persist data beyond the lifecycle of individual containers.

- Namespaces: Allow for isolation of resources and management of multiple environments within a single Kubernetes cluster.

- Deployments: Describe the desired state for your application, such as which images to use for your containers, the number of pod replicas, and update policies.
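To make these objects more concrete, here is a minimal sketch that builds a Deployment and a matching Service as plain Python dictionaries and prints them as JSON. The name `web`, the `app: web` label, the `nginx:1.27` image, and the port numbers are illustrative assumptions, not values from any particular cluster. The `selects` helper is a hypothetical function that mimics, in simplified form, how a Service selector matches pod labels.

```python
import json

# Hypothetical label shared by the Deployment's pods and the Service selector.
LABELS = {"app": "web"}


def make_deployment(name: str, image: str, replicas: int) -> dict:
    """Return an apps/v1 Deployment manifest describing the desired state."""
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "namespace": "default"},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": dict(LABELS)},
            "template": {
                "metadata": {"labels": dict(LABELS)},
                "spec": {
                    "containers": [
                        {"name": name, "image": image,
                         "ports": [{"containerPort": 80}]}
                    ]
                },
            },
        },
    }


def make_service(name: str) -> dict:
    """Return a v1 Service that routes port 80 to pods carrying LABELS."""
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name, "namespace": "default"},
        "spec": {
            "selector": dict(LABELS),
            "ports": [{"port": 80, "targetPort": 80}],
        },
    }


def selects(service: dict, pod_labels: dict) -> bool:
    """True if every key/value in the Service selector appears in pod_labels."""
    selector = service["spec"]["selector"]
    return all(pod_labels.get(k) == v for k, v in selector.items())


if __name__ == "__main__":
    print(json.dumps(make_deployment("web", "nginx:1.27", replicas=3), indent=2))
    print(json.dumps(make_service("web"), indent=2))
```

Saved to a file, JSON like this can be submitted with `kubectl apply -f <file>`, just like the more common YAML form.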

Advantages of Kubernetes

Kubernetes offers numerous benefits that make it an attractive choice for managing containerized applications:

1. Scalability

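Kubernetes can automatically scale your applications up and down based on demand, for example by adding or removing pod replicas in response to observed metrics such as CPU utilization. This improves resource utilization and lets applications absorb traffic spikes without manual intervention.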
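One mechanism behind this is the Horizontal Pod Autoscaler, whose documented core calculation is `desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue)`. The sketch below reproduces only that formula. The function name and the `min_replicas`/`max_replicas` defaults are illustrative assumptions, and the real autoscaler also applies a tolerance and stabilization behavior that is left out here.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float,
                     target_metric: float, min_replicas: int = 1,
                     max_replicas: int = 10) -> int:
    """Apply the HPA ratio formula, then clamp to the allowed replica range."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))


if __name__ == "__main__":
    # 3 replicas averaging 90% CPU against a 60% target: ceil(4.5) replicas.
    print(desired_replicas(3, 90, 60))
```

With three replicas at 90% average CPU and a 60% target, the formula asks for `ceil(3 * 90 / 60) = 5` replicas.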

2. High Availability and Fault Tolerance

Kubernetes is designed to keep your applications available. If a container fails, Kubernetes restarts it. If a pod or node fails, pods managed by a controller such as a Deployment are replaced, with the replacements scheduled onto healthy nodes as needed. This self-healing capability minimizes downtime and disruptions.

3. Portability

By abstracting away the underlying infrastructure, Kubernetes allows you to run your applications consistently across different environments, whether on-premises, in the cloud, or in a hybrid setup. This portability helps reduce vendor lock-in.

4. Efficient Resource Utilization

Kubernetes places workloads onto nodes according to the CPU and memory each container requests, packing applications onto shared machines rather than dedicating a machine to each one. This can lead to cost savings and improved performance.

5. Declarative Configuration

With Kubernetes, you declare the desired state of your applications using simple YAML or JSON files. Kubernetes continuously works to maintain this state, making deployments and updates predictable and repeatable.
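The idea of "continuously working to maintain this state" is often described as a reconciliation loop: compare the declared state with what is observed, then act on the difference. The following is a toy sketch of that idea only. `ToyCluster` and `reconcile` are hypothetical names, and the in-memory set stands in for real cluster state.

```python
from dataclasses import dataclass, field
from itertools import count


@dataclass
class ToyCluster:
    """Hypothetical in-memory stand-in for observed cluster state."""
    running_pods: set = field(default_factory=set)
    _ids: count = field(default_factory=lambda: count(1))

    def start_pod(self) -> str:
        name = f"web-{next(self._ids)}"
        self.running_pods.add(name)
        return name

    def stop_pod(self, name: str) -> None:
        self.running_pods.discard(name)


def reconcile(cluster: ToyCluster, desired_replicas: int) -> None:
    """Drive the observed pod count toward the declared replica count."""
    while len(cluster.running_pods) < desired_replicas:
        cluster.start_pod()
    while len(cluster.running_pods) > desired_replicas:
        cluster.stop_pod(sorted(cluster.running_pods)[-1])


if __name__ == "__main__":
    cluster = ToyCluster()
    reconcile(cluster, 3)        # initial rollout
    cluster.stop_pod("web-2")    # simulate a pod failure
    reconcile(cluster, 3)        # the loop replaces the missing pod
    print(sorted(cluster.running_pods))
```

The caller never says "start one pod" or "stop two pods". It only states the target count, and the loop works out the steps, which is what makes repeated runs predictable.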

6. Extensibility

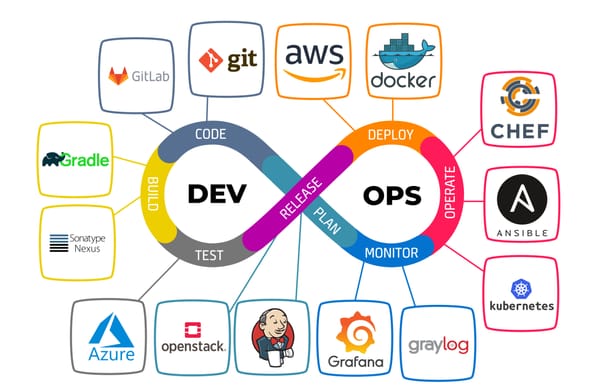

Kubernetes is highly extensible, allowing you to integrate with various third-party tools and custom plugins. This flexibility ensures you can tailor the platform to your specific needs.

7. Robust Ecosystem

Kubernetes boasts a vibrant ecosystem of tools and services. From monitoring and logging to security and networking, there's a wide array of solutions available to enhance and extend your Kubernetes environment.

Kubernetes Components

When you deploy Kubernetes, you get a cluster.

A Kubernetes cluster consists of a set of worker machines, called nodes, that run containerized applications. Every cluster has at least one worker node.

The worker node(s) host the Pods that are the components of the application workload. The control plane manages the worker nodes and the Pods in the cluster. In production environments, the control plane usually runs across multiple computers and a cluster usually runs multiple nodes, providing fault-tolerance and high availability.

This document outlines the various components you need to have for a complete and working Kubernetes cluster.

Control Plane Components

The control plane's components make global decisions about the cluster (for example, scheduling), as well as detecting and responding to cluster events (for example, starting up a new pod when a Deployment's replicas field is unsatisfied).

Control plane components can be run on any machine in the cluster. However, for simplicity, setup scripts typically start all control plane components on the same machine, and do not run user containers on this machine. See "Creating Highly Available Clusters with kubeadm" in the Kubernetes documentation for an example control plane setup that runs across multiple machines.

kube-apiserver

The API server is a component of the Kubernetes control plane that exposes the Kubernetes API. The API server is the front end for the Kubernetes control plane.

The main implementation of a Kubernetes API server is kube-apiserver. kube-apiserver is designed to scale horizontally—that is, it scales by deploying more instances. You can run several instances of kube-apiserver and balance traffic between those instances.
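Because the API is served over HTTP, every resource has a predictable URL path. As a rough illustration, the sketch below builds such paths: resources in the core group live under `/api/<version>`, while named groups such as `apps` live under `/apis/<group>/<version>`. The helper name `resource_path` and its parameters are hypothetical and exist only for this example.

```python
from typing import Optional


def resource_path(resource: str, version: str = "v1", group: str = "",
                  namespace: Optional[str] = None,
                  name: Optional[str] = None) -> str:
    """Build the REST path for a resource collection or a single object."""
    # The core group has no group segment and uses the /api prefix.
    prefix = f"/apis/{group}/{version}" if group else f"/api/{version}"
    parts = [prefix]
    if namespace:
        parts.append(f"namespaces/{namespace}")
    parts.append(resource)
    if name:
        parts.append(name)
    return "/".join(parts)


if __name__ == "__main__":
    print(resource_path("pods", namespace="default"))
    print(resource_path("deployments", group="apps", namespace="prod", name="web"))
    print(resource_path("nodes"))
```

Paths like these can be tried against a live cluster with `kubectl get --raw <path>`, which sends the request through your existing kubectl credentials.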

etcd

Consistent and highly-available key value store used as Kubernetes' backing store for all cluster data.

If your Kubernetes cluster uses etcd as its backing store, make sure you have a backup plan for the data.

You can find in-depth information about etcd in the official documentation.

kube-scheduler

Control plane component that watches for newly created Pods with no assigned node, and selects a node for them to run on.

Factors taken into account for scheduling decisions include: individual and collective resource requirements, hardware/software/policy constraints, affinity and anti-affinity specifications, data locality, inter-workload interference, and deadlines.
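At a high level, the scheduler first filters out nodes that cannot run the Pod and then scores the remaining ones. The toy sketch below imitates that two-step shape using only CPU, memory, and a label-based node selector. All class and function names are hypothetical, and the scoring rule (prefer the node with the most CPU left over) is an assumption chosen for simplicity, not the scheduler's actual policy.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Node:
    name: str
    free_cpu_millis: int      # free CPU in millicores (1000 = one core)
    free_memory_mib: int
    labels: dict


@dataclass
class PodRequest:
    cpu_millis: int
    memory_mib: int
    node_selector: dict


def feasible(node: Node, pod: PodRequest) -> bool:
    """Filter step: the node must have room and carry the required labels."""
    fits = (node.free_cpu_millis >= pod.cpu_millis
            and node.free_memory_mib >= pod.memory_mib)
    matches = all(node.labels.get(k) == v for k, v in pod.node_selector.items())
    return fits and matches


def score(node: Node, pod: PodRequest) -> int:
    """Toy score step: more CPU left after placement is better."""
    return node.free_cpu_millis - pod.cpu_millis


def pick_node(nodes: List[Node], pod: PodRequest) -> Optional[str]:
    """Return the best feasible node name, or None if nothing fits."""
    candidates = [n for n in nodes if feasible(n, pod)]
    if not candidates:
        return None  # in a real cluster the Pod would remain Pending
    return max(candidates, key=lambda n: score(n, pod)).name
```

For instance, a Pod that requests 1000 millicores and carries the selector `disk: ssd` is placed only on nodes that both have that much free CPU and carry the label, even if an unlabeled node has more capacity.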

kube-controller-manager

Control plane component that runs controller processes.

Logically, each controller is a separate process, but to reduce complexity, they are all compiled into a single binary and run in a single process.

There are many different types of controllers. Some examples of them are:

- Node controller: Responsible for noticing and responding when nodes go down.

- Job controller: Watches for Job objects that represent one-off tasks, then creates Pods to run those tasks to completion.

- EndpointSlice controller: Populates EndpointSlice objects (to provide a link between Services and Pods).

- ServiceAccount controller: Creates default ServiceAccounts for new namespaces.

The above is not an exhaustive list.
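To illustrate the idea of several logically separate controllers running inside one process, here is a toy sketch with two control loops sharing a single in-memory state. Everything in it is hypothetical: the state layout, the `heartbeat_timeout` value, and the function names are assumptions for illustration, and real controllers read and write objects through the API server rather than a dictionary.

```python
from typing import Any, Callable, Dict, List

# Hypothetical shared state standing in for objects read via the API server.
State = Dict[str, Any]


def node_controller(state: State) -> None:
    """Mark nodes not ready when their last heartbeat is too old."""
    for node in state["nodes"].values():
        age = state["now"] - node["last_heartbeat"]
        node["ready"] = age <= state["heartbeat_timeout"]


def job_controller(state: State) -> None:
    """Create one pod for every Job that does not have one yet."""
    for job_name in state["jobs"]:
        state["pods"].setdefault(f"{job_name}-pod", {"job": job_name})


def run_once(controllers: List[Callable[[State], None]], state: State) -> None:
    """Run each control loop once, all inside the same process."""
    for controller in controllers:
        controller(state)


if __name__ == "__main__":
    state: State = {
        "now": 100,
        "heartbeat_timeout": 40,
        "nodes": {"n1": {"last_heartbeat": 90}, "n2": {"last_heartbeat": 10}},
        "jobs": ["backup"],
        "pods": {},
    }
    run_once([node_controller, job_controller], state)
    print(state["nodes"], state["pods"])
```

Each loop is idempotent: running it again on unchanged state produces no further changes, which is the property that lets controllers be re-run safely.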

cloud-controller-manager

A Kubernetes control plane component that embeds cloud-specific control logic. The cloud controller manager lets you link your cluster into your cloud provider's API, and separates out the components that interact with that cloud platform from components that only interact with your cluster.

The cloud-controller-manager only runs controllers that are specific to your cloud provider. If you are running Kubernetes on your own premises, or in a learning environment inside your own PC, the cluster does not have a cloud controller manager.

As with the kube-controller-manager, the cloud-controller-manager combines several logically independent control loops into a single binary that you run as a single process. You can scale horizontally (run more than one copy) to improve performance or to help tolerate failures.

The following controllers can have cloud provider dependencies:

- Node controller: For checking the cloud provider to determine if a node has been deleted in the cloud after it stops responding.

- Route controller: For setting up routes in the underlying cloud infrastructure.

- Service controller: For creating, updating, and deleting cloud provider load balancers.

Node Components

Node components run on every node, maintaining running pods and providing the Kubernetes runtime environment.

kubelet

An agent that runs on each node in the cluster. It makes sure that containers are running in a Pod.

The kubelet takes a set of PodSpecs that are provided through various mechanisms and ensures that the containers described in those PodSpecs are running and healthy. The kubelet doesn't manage containers which were not created by Kubernetes.

kube-proxy

kube-proxy is a network proxy that runs on each node in your cluster, implementing part of the Kubernetes Service concept.

kube-proxy maintains network rules on nodes. These network rules allow network communication to your Pods from network sessions inside or outside of your cluster.

kube-proxy uses the operating system packet filtering layer if there is one and it's available. Otherwise, kube-proxy forwards the traffic itself.
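Conceptually, the rules kube-proxy maintains map a Service's stable address to one of the Pods currently backing it. The toy sketch below models that mapping in user space. `ToyServiceProxy` is a hypothetical class, the IP addresses are made-up examples, and round-robin selection is an assumption chosen to keep the output deterministic. Real kube-proxy modes program the kernel's packet filtering layer and may pick backends differently.

```python
from itertools import cycle
from typing import Dict, Iterator, List, Tuple

Endpoint = Tuple[str, int]  # (IP address, port)


class ToyServiceProxy:
    """Maps a Service address to its backing Pod endpoints."""

    def __init__(self) -> None:
        self._backends: Dict[Endpoint, Iterator[Endpoint]] = {}

    def set_endpoints(self, service: Endpoint, pods: List[Endpoint]) -> None:
        """Replace the backend list for a Service (called when Pods change)."""
        if not pods:
            raise ValueError("a Service needs at least one endpoint")
        self._backends[service] = cycle(list(pods))

    def route(self, service: Endpoint) -> Endpoint:
        """Pick the next backend Pod for a new connection to the Service."""
        return next(self._backends[service])


if __name__ == "__main__":
    proxy = ToyServiceProxy()
    service = ("10.96.0.10", 80)
    proxy.set_endpoints(service, [("10.244.1.5", 8080), ("10.244.2.7", 8080)])
    for _ in range(3):
        print(proxy.route(service))
```

Clients only ever see the Service address. When Pods come and go, only the backend list changes.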

Container runtime

A fundamental component that empowers Kubernetes to run containers effectively. It is responsible for managing the execution and lifecycle of containers within the Kubernetes environment.

Kubernetes supports container runtimes such as containerd, CRI-O, and any other implementation of the Kubernetes CRI (Container Runtime Interface).

Addons

Addons use Kubernetes resources (DaemonSet, Deployment, etc.) to implement cluster features. Because addons provide cluster-level features, their namespaced resources belong within the kube-system namespace.

Selected addons are described below; for an extended list of available addons, see the Addons page in the Kubernetes documentation.

DNS

While the other addons are not strictly required, all Kubernetes clusters should have cluster DNS, as many examples rely on it.

Cluster DNS is a DNS server, in addition to the other DNS server(s) in your environment, which serves DNS records for Kubernetes services.

Containers started by Kubernetes automatically include this DNS server in their DNS searches.
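In practice, a Service is reachable at a name of the form `<service>.<namespace>.svc.<cluster-domain>`. The sketch below builds that name and shows how a short name can expand through a Pod's DNS search list. It assumes the common default cluster domain `cluster.local`, which a cluster may override, and the function names are hypothetical.

```python
from typing import List


def service_fqdn(service: str, namespace: str = "default",
                 cluster_domain: str = "cluster.local") -> str:
    """Fully qualified DNS name of a Service."""
    return f"{service}.{namespace}.svc.{cluster_domain}"


def search_domains(pod_namespace: str,
                   cluster_domain: str = "cluster.local") -> List[str]:
    """Search suffixes typically configured for a Pod in pod_namespace."""
    return [
        f"{pod_namespace}.svc.{cluster_domain}",
        f"svc.{cluster_domain}",
        cluster_domain,
    ]


def candidate_names(query: str, pod_namespace: str) -> List[str]:
    """Expand a short name the way a resolver search list would."""
    return [f"{query}.{suffix}" for suffix in search_domains(pod_namespace)]


if __name__ == "__main__":
    print(service_fqdn("web", "prod"))
    print(candidate_names("web", "prod"))
```

This is why a Pod can usually reach a Service in its own namespace as just `web`, and one in another namespace as `web.prod`.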

Web UI (Dashboard)

Dashboard is a general purpose, web-based UI for Kubernetes clusters. It allows users to manage and troubleshoot applications running in the cluster, as well as the cluster itself.

Container Resource Monitoring

Container Resource Monitoring records generic time-series metrics about containers in a central database, and provides a UI for browsing that data.

Cluster-level Logging

A cluster-level logging mechanism is responsible for saving container logs to a central log store with a search/browsing interface.

Network Plugins

Network plugins are software components that implement the container network interface (CNI) specification. They are responsible for allocating IP addresses to pods and enabling them to communicate with each other within the cluster.
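The address allocation part of that job can be pictured with a very small sketch. The class below hands out addresses from a per-node Pod CIDR using Python's standard `ipaddress` module. `ToyIpam` is a hypothetical name, the `10.244.1.0/30` range is a made-up example, and a real CNI plugin also handles address release, persistence, and network interface setup, none of which is modeled here.

```python
import ipaddress
from typing import Dict


class ToyIpam:
    """Assigns each Pod a unique address from a node's Pod CIDR."""

    def __init__(self, pod_cidr: str) -> None:
        network = ipaddress.ip_network(pod_cidr)
        self._free = iter(network.hosts())   # usable host addresses, in order
        self._assigned: Dict[str, str] = {}

    def allocate(self, pod_name: str) -> str:
        """Return the Pod's address, assigning a new one on first request."""
        if pod_name not in self._assigned:
            try:
                self._assigned[pod_name] = str(next(self._free))
            except StopIteration:
                raise RuntimeError("pod CIDR exhausted") from None
        return self._assigned[pod_name]


if __name__ == "__main__":
    ipam = ToyIpam("10.244.1.0/30")
    print(ipam.allocate("web-1"))
    print(ipam.allocate("web-2"))
```

Asking again for a Pod that already has an address returns the same address, and once the range is used up the allocator raises an error instead of reusing one.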

Conclusion

Kubernetes has revolutionized the way we deploy and manage containerized applications. Its ability to automate complex tasks, ensure high availability, and provide a consistent environment across different infrastructures makes it an indispensable tool for modern IT operations. By adopting Kubernetes, organizations can achieve greater efficiency, scalability, and resilience in their software delivery processes.

Whether you're just starting with containers or looking to optimize your existing container management practices, Kubernetes offers the features and flexibility needed to meet your goals. As the technology continues to evolve, embracing Kubernetes will likely be a key factor in staying competitive in the dynamic landscape of software development and operations.